Deep Learning vs Neural Networks

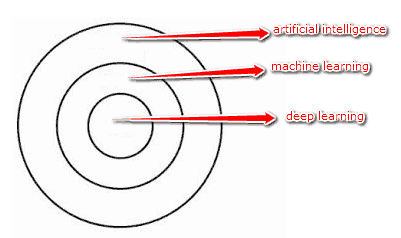

Data science has become a part of our lives, both directly and indirectly. Its everyday applications include voice assistants such as Amazon’s Alexa and Apple’s Siri, weather forecasting, personalized advertisements, chatbots, recommendations on websites, and much more. It is fascinating how quickly we have shifted into this era of data. Data science has changed nearly every industry and organization in some way, and it continues to do so by adding new functionality, producing better results, and improving customer satisfaction. Even so, the field is far from fully explored and still offers plenty of room for research and development, so it is no surprise that job opportunities in this domain are growing rapidly. Terms like Machine Learning, Artificial Intelligence, Neural Networks, and Deep Learning are often used interchangeably, which causes confusion among general readers. Here, we address two of these technologies: deep learning and neural networks. Let us consider them individually.